One of the greatest challenges in attempting to monitor the entire planet lies in discerning certainty amidst the chaotic, conflicting cacophony that is global journalism. How should a system handle starkly conflicting reports, especially when the very same article offers wildly different assessments?

To the casual news consumers who make up the majority of news readers, the inevitable errors in the world's journalism each day are a minor annoyance that largely goes unnoticed. To machines attempting to distill some sense of order from the global chaos, conflicting factual statements about breaking news events are especially troublesome, since they complicate any attempt to estimate the contours of an event, its potential impact and how much confidence to place in the current public information environment.

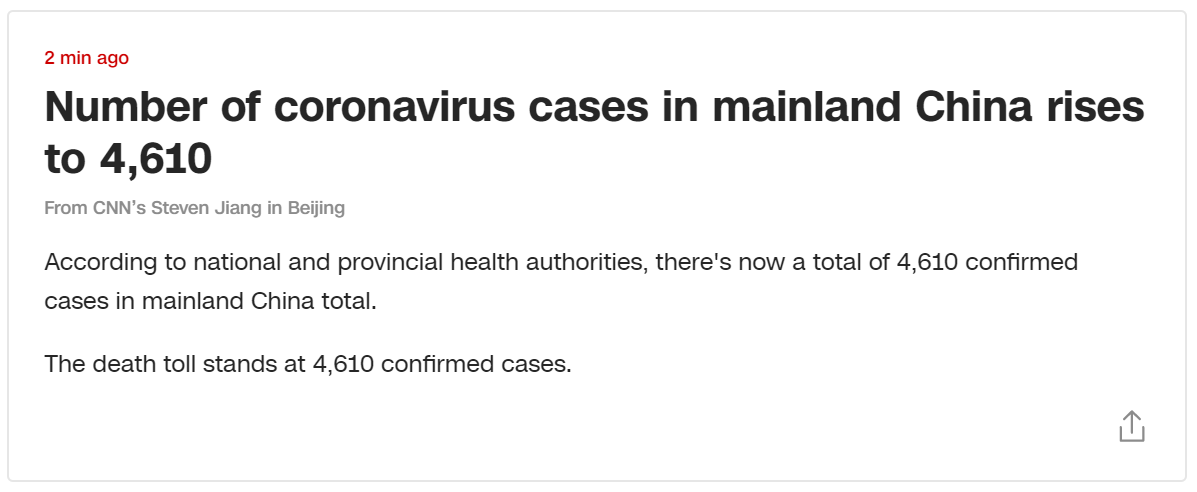

Take the following breaking news report issued by CNN earlier this morning in response to updated statistics just released about the Chinese coronavirus. To an automated count extraction system, this breaking update offers government confirmation of 4,610 "confirmed cases" and goes on to state that "the death toll stands at 4,610 confirmed cases." A natural language parse of this article might reasonably connect the two statements based on their shared precise number and assume that the "4,610 confirmed cases" refers to an updated death count, reflecting an extraordinarily high mortality rate.
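To make the ambiguity concrete, here is a minimal sketch of the kind of naive pattern-based count extraction described above. The regular expressions, the function names and the sample sentence (assembled from the two phrases quoted above, not the article's full text) are all illustrative assumptions, not a description of how any production extractor works.

```python
import re

# Hypothetical patterns for illustration only.
NUMBER = r"(\d{1,3}(?:,\d{3})*|\d+)"
CASES_RE = re.compile(NUMBER + r'\s+"?confirmed cases"?', re.IGNORECASE)
DEATHS_RE = re.compile(r"death toll (?:stands at|rises to|is)\s+" + NUMBER,
                       re.IGNORECASE)


def to_int(text):
    """Convert a string such as '4,610' to the integer 4610."""
    return int(text.replace(",", ""))


def extract_counts(text):
    """Collect numbers tied to case and death cues, noting any overlap."""
    cases = {to_int(m.group(1)) for m in CASES_RE.finditer(text)}
    deaths = {to_int(m.group(1)) for m in DEATHS_RE.finditer(text)}
    # A number claimed by both cues cannot be assigned with confidence.
    return {"cases": cases, "deaths": deaths, "ambiguous": cases & deaths}


# Sample sentence built from the two quoted phrases (an assumption).
sample = ("Authorities report 4,610 confirmed cases. "
          "The death toll stands at 4,610 confirmed cases.")
result = extract_counts(sample)
```

In this sketch the same figure lands in both the case set and the death set, so the extractor can at best mark it as ambiguous rather than decide which reading is correct.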

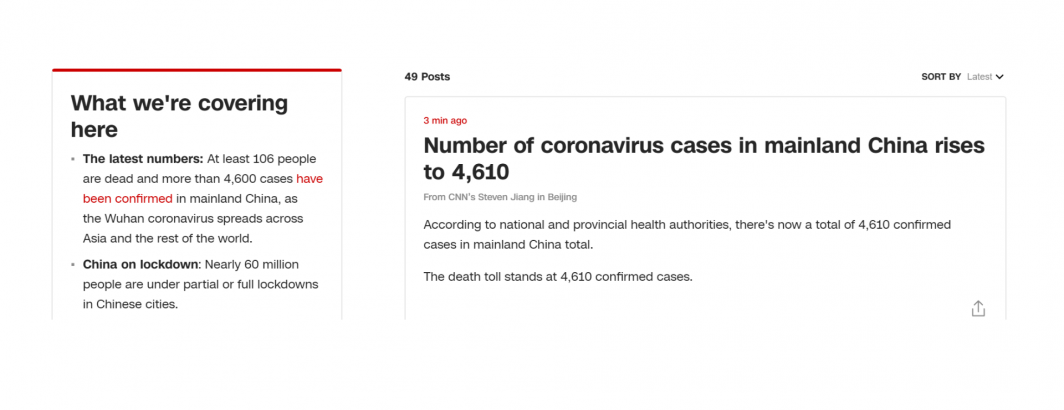

However, if the article in question is examined in the context of other CNN reporting appearing at the same time (in this case the standing fixed inset at the left of the page), it can be seen that at the very moment this breaking news update was issued, other parts of CNN were reporting 106 deaths with 4,600 "cases". To a machine processing this article, does the left-hand inset indicate that the breaking report is in error? Or does the breaking report supersede the previous information and reflect brand new information from the government? There is simply not enough information here to decide. In this case the original report was edited after around 15 minutes to correct the death count, but for realtime monitoring systems, that represents a quarter of an hour of incorrect information.

Such situations occur myriad times every day all across the world and reflect the chaos that systems like GDELT must attempt to make sense of. Reasoning over such conflicting data streams requires more than simple information extraction and website whitelists. It requires systems that are able to triangulate across sources and reason about what they see.
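As a rough illustration of what triangulating across sources could look like, the sketch below counts how many other reports agree with each report's death count within a tolerance. The `Report` type, the source labels, the 10% tolerance and especially the third report are hypothetical; only the 4,610 and 106 figures come from the example above. This is one simple heuristic under those assumptions, not a description of how GDELT itself reasons.

```python
from dataclasses import dataclass


@dataclass
class Report:
    source: str   # hypothetical label for where the count appeared
    deaths: int   # death count extracted from that report


def agrees(a, b, tolerance=0.10):
    """True if two counts differ by at most `tolerance` of the larger one."""
    return abs(a - b) <= tolerance * max(a, b)


def corroboration(reports, tolerance=0.10):
    """Map each source to the number of other reports that agree with it."""
    return {
        r.source: sum(
            1 for o in reports
            if o is not r and agrees(r.deaths, o.deaths, tolerance)
        )
        for r in reports
    }


# The two conflicting figures from the example above.
two = [Report("breaking_update", 4610), Report("page_inset", 106)]
two_scores = corroboration(two)

# Adding a hypothetical third, independent report (an invented data point).
three = two + [Report("other_outlet", 106)]
three_scores = corroboration(three)
```

With only the two conflicting reports, neither is corroborated, which mirrors the point that there is not enough information to decide. With the hypothetical third report, the lower figure gains support, though corroboration alone does not prove the breaking update wrong: it could still be the newest information, and an inset from the same outlet is not an independent source.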