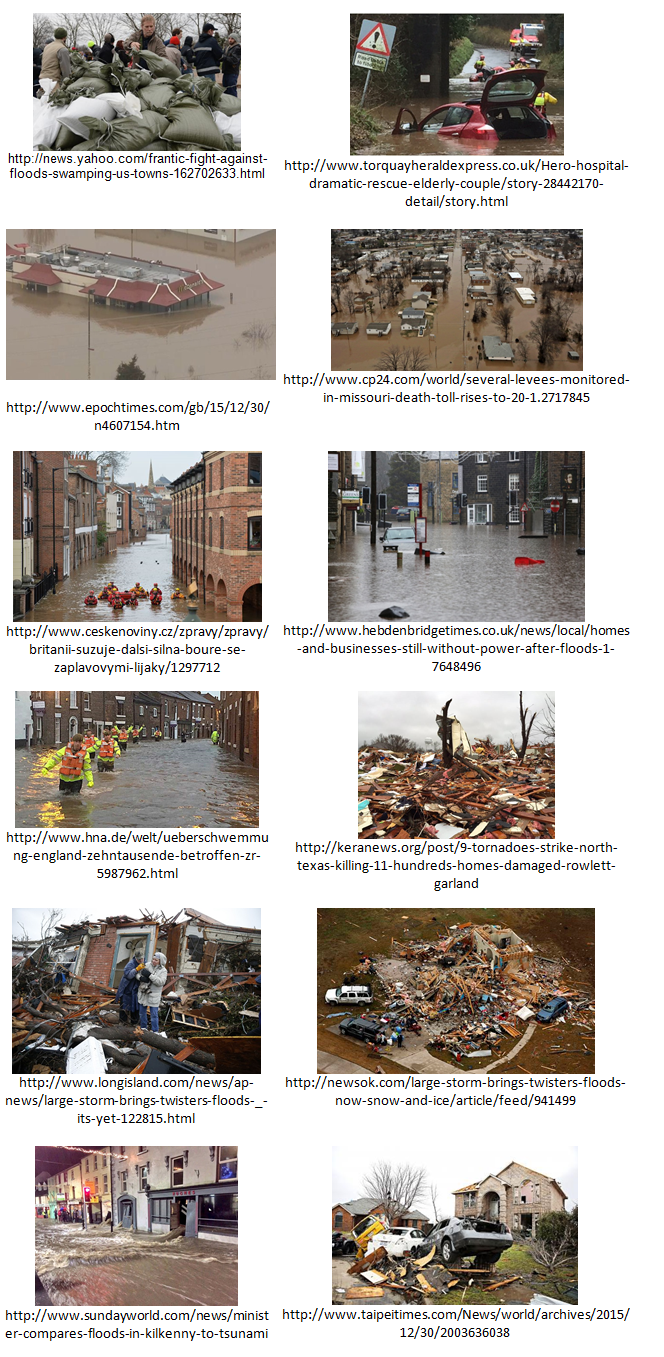

One of the early GDELT APIs we created was a special live stream of all coverage worldwide that GDELT monitors about currently active natural disasters. As soon as a disaster is assigned a GLIDE number, we begin a live JSON and CSV feed of key selected indicators derived from global media coverage. One of those feeds is a list of all articles about the disaster that contain images, making it possible for aid organizations to triage and better understand the extent and nature of the damage across the affected area.

One of the challenges, however, lies in the sheer volume and speed of the GDELT firehose, which can easily yield tens or even hundreds of thousands of images for a given disaster. Yet photographs of presidents at podiums, smiling volunteers, aid trucks, and press conferences are of little use for disaster response, and reviewing them wastes valuable human time. What is needed is a fully automated system that can rapidly triage the firehose of available imagery and filter it down to only the imagery that shows damage or offers other relevant insight.

We previously demonstrated the enormous power of the Google Cloud Vision API in triaging imagery of Cyclone Pam's impact on Vanuatu to identify imagery of damage and separate it from general imagery of volunteers and press conferences. To explore how the system might work in a real-world scenario, we used the GDELT Global Knowledge Graph to filter for all coverage of worldwide natural disasters over the last 48 hours and used the Cloud Vision API to further filter to just those articles containing imagery of either flooding or damage. The collage below offers a small sample of the resulting imagery, illustrating the enormous potential of this approach for live triaging of the firehose of global imagery that emerges from a disaster area to aid in better response.
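
To make the second filtering stage concrete, here is a minimal sketch of how label-based triage could look in Python. It is an illustration under stated assumptions, not the pipeline we actually ran: it assumes each image has already been annotated and that its labels are available as a list of dictionaries with `description` and `score` fields, mirroring the `labelAnnotations` entries the Cloud Vision API returns. The label vocabulary in `DAMAGE_LABELS`, the `MIN_SCORE` threshold, and the function names are all hypothetical choices made for this example.

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical vocabulary of labels treated as evidence of flooding or damage.
# These are illustrative guesses, not a list taken from the original workflow.
DAMAGE_LABELS = {"flood", "disaster", "rubble", "earthquake", "demolition"}

# Hypothetical confidence cutoff; tune against hand-reviewed samples.
MIN_SCORE = 0.7

Annotation = Dict[str, object]


def is_damage_image(
    label_annotations: Iterable[Annotation],
    labels: set = DAMAGE_LABELS,
    min_score: float = MIN_SCORE,
) -> bool:
    """Return True if any label of interest meets the confidence cutoff."""
    for annotation in label_annotations:
        description = str(annotation.get("description", "")).lower()
        score = float(annotation.get("score", 0.0))
        if description in labels and score >= min_score:
            return True
    return False


def triage(images: Iterable[Tuple[str, List[Annotation]]]) -> List[str]:
    """Keep only the image URLs whose labels suggest flooding or damage."""
    return [url for url, annotations in images if is_damage_image(annotations)]


# Made-up sample data shaped like Cloud Vision label annotations.
sample = [
    ("https://example.com/flood.jpg",
     [{"description": "Flood", "score": 0.92}, {"description": "Water", "score": 0.88}]),
    ("https://example.com/podium.jpg",
     [{"description": "Speech", "score": 0.95}]),
    ("https://example.com/weak.jpg",
     [{"description": "Rubble", "score": 0.41}]),
]

relevant = triage(sample)
```

In this made-up sample only the flood image survives: the podium photo carries no label of interest, and the rubble label falls below the cutoff. In practice the label set and threshold would need tuning against a hand-reviewed sample of imagery from each disaster.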