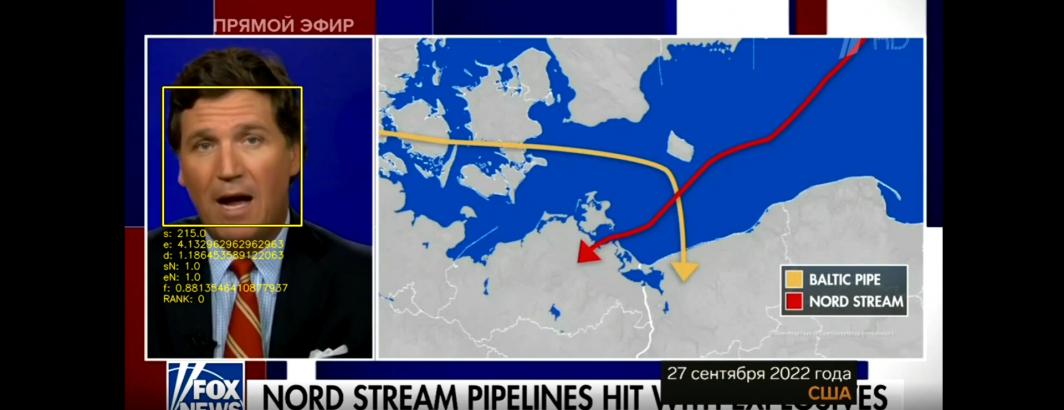

Yesterday we demonstrated how off-the-shelf face detection and similarity matching could be used to catalog all of the excerpted Tucker Carlson clips in a single Russian television news broadcast. How might we scale this up to scan an entire week and a half of broadcasts for excerpts from his show? Because Carlson is an extremely high-profile American public figure whose broadcasts are being used by Russian state media to advance its narratives about Russia's invasion of Ukraine, there is compelling public interest in better understanding how such external narratives are being woven into the official Russian narration of the war.

The Visual Explorer works by extracting one frame every four seconds from each broadcast and arranging those frames into a fixed-interval thumbnail grid that captures the narrative visual arc of the broadcast. The full-resolution versions of the images in each thumbnail grid are available as a downloadable ZIP file per broadcast, creating "ngrams for video" that enable non-consumptive computational visual analysis of television news at scale.
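Because frames are sampled at a fixed interval, a frame's number is enough to locate it within its broadcast. Below is a minimal bash sketch of that mapping. It assumes that frame 1 sits at the very start of the broadcast and that each later frame is 4 seconds after the previous one. The function names are hypothetical helpers and are not part of the Visual Explorer:

```bash
# Hypothetical helper: offset in seconds of a frame from the start of its
# broadcast, assuming frame 1 is at offset 0 and frames are 4 seconds apart.
frame_offset_seconds() {
  local frame="$1"
  # "10#" forces base 10; bash would otherwise treat a zero-padded
  # frame number such as 000809 as an (invalid) octal literal.
  echo $(( (10#$frame - 1) * 4 ))
}

# Hypothetical helper: format a number of seconds as H:MM:SS.
format_offset() {
  local s="$1"
  printf '%d:%02d:%02d\n' $(( s / 3600 )) $(( s % 3600 / 60 )) $(( s % 60 ))
}

format_offset "$(frame_offset_seconds 000809)"   # prints 0:53:52
```

The zero-padding matters. Without the `10#` prefix, bash arithmetic on `000809` fails with a "value too great for base" error.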

Using the off-the-shelf open source "facedetect" tool, we can rapidly annotate a television news broadcast by cataloging every place where a human face is visible. The same tool can also compare a known face against all of the detected faces and flag those whose similarity exceeds a given threshold. Unlike true facial recognition, which relies on sophisticated facial landmark analysis, this is a more rudimentary image similarity assessment of the cropped facial regions. Nor does it associate a face with an identity: it is simply "find more like this" similarity matching, akin to a reverse image search. Yet despite this more basic approach, it can scan a broadcast for a specific face and catalog where it appears with remarkable accuracy.

How might we apply yesterday's single-broadcast workflow to an entire week and a half of Russian television news?

First, we'll need to install three packages: facedetect for face detection, GNU parallel to run our processing in parallel across a single machine or a cluster of machines, and jq to extract selected fields from JSON:

```bash
apt-get -y install facedetect
apt-get -y install parallel
apt-get -y install jq
```

Now we'll compile the list of dates we wish to analyze. In this case we'll examine October 1st through the early morning of October 11th:

```bash
#make date list...
start=20221001; end=20221011
while [[ ! $start > $end ]]; do
  echo $start
  start=$(date -d "$start + 1 day" "+%Y%m%d")
done > DATES
```

Next, we'll use that list of dates to fetch the Visual Explorer's JSON inventory files for each of the four channels (RUSSIA1, RUSSIA24, NTV and 1TV). These let us compile the list of IDs for every broadcast on those channels on those days:

```bash
#get the inventory files
mkdir JSON
time cat DATES | parallel --eta 'wget -q https://storage.googleapis.com/data.gdeltproject.org/gdeltv3/iatv/visualexplorer/RUSSIA1.{}.inventory.json -P ./JSON/'
time cat DATES | parallel --eta 'wget -q https://storage.googleapis.com/data.gdeltproject.org/gdeltv3/iatv/visualexplorer/RUSSIA24.{}.inventory.json -P ./JSON/'
time cat DATES | parallel --eta 'wget -q https://storage.googleapis.com/data.gdeltproject.org/gdeltv3/iatv/visualexplorer/NTV.{}.inventory.json -P ./JSON/'
time cat DATES | parallel --eta 'wget -q https://storage.googleapis.com/data.gdeltproject.org/gdeltv3/iatv/visualexplorer/1TV.{}.inventory.json -P ./JSON/'
#extract the broadcast IDs (the jq filter is quoted so the shell cannot glob-expand it)
rm -f IDS; find ./JSON/ -depth -name '*.json' | parallel --eta 'jq -r ".shows[].id" {} >> IDS'
wc -l IDS
```

```
1121
```
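The four per-channel wget commands above differ only in the channel name. Here is a minimal sketch, using a hypothetical helper name, that emits every channel/date inventory URL in one pass. It assumes the DATES file holds one YYYYMMDD date per line, each terminated by a newline:

```bash
# Hypothetical helper: print one Visual Explorer inventory URL for every
# channel/date pair, reading dates (one YYYYMMDD per line) from the file in $1.
inventory_urls() {
  local base="https://storage.googleapis.com/data.gdeltproject.org/gdeltv3/iatv/visualexplorer"
  local chan date
  for chan in RUSSIA1 RUSSIA24 NTV 1TV; do
    while IFS= read -r date; do
      # Skip blank lines so they do not produce malformed URLs.
      if [ -n "$date" ]; then
        echo "$base/$chan.$date.inventory.json"
      fi
    done < "$1"
  done
}

# Usage: inventory_urls DATES | parallel --eta 'wget -q {} -P ./JSON/'
```

Piping this list into GNU parallel as shown in the usage comment would fetch the same files as the four separate commands.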

In all, there are 1,121 broadcasts across those 4 channels over those 11 days. Next, we'll download the ZIP file for each of those IDs and unpack it into its underlying JPG files. Since this will yield a vast number of small files, you may wish to use a RAM disk or SSD for this step:

```bash
#download and unpack them all...
mkdir IMAGES
time cat IDS | parallel --eta 'wget -q https://storage.googleapis.com/data.gdeltproject.org/gdeltv3/iatv/visualexplorer/{}.zip -P ./IMAGES/'
time find ./IMAGES/ -depth -name '*.zip' | parallel --eta 'unzip -n -q -d ./IMAGES/ {} && rm {}'
time find ./IMAGES/ -depth -name "*.jpg" | wc -l
```

```
927588
```

In the end, this yields 927,588 frames to scan. We download a sample Tucker Carlson screen grab from the web and use facedetect to compare it individually against each of the 927,588 frames, using GNU parallel to run across all of the processors on our VM:

```bash
#and scan them all for carlson faces
wget https://storage.googleapis.com/data.gdeltproject.org/blog/2022-tv-news-visual-explorer/2022-tve-facedetect-scan-tuckerface.png
#start a fresh run (skip this line when resuming an interrupted run, so --resume can continue from PAR.LOG)
rm -f MATCHING.TXT.tmp PAR.LOG
time find ./IMAGES/ -depth -name "*.jpg" | parallel --resume --joblog ./PAR.LOG --eta 'facedetect -q --search-threshold 40 -s ./2022-tve-facedetect-scan-tuckerface.png {} && echo {} >> MATCHING.TXT.tmp'
sort MATCHING.TXT.tmp > MATCHING.TXT
```

On our 60-core VM, with the frames stored on a RAM disk to eliminate disk I/O as a limiting factor, this scan took 10 hours and 40 minutes.
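These figures also imply a rough per-frame cost, which is useful for sizing a machine for other date ranges. Below is a minimal back-of-the-envelope sketch with a hypothetical helper name. It assumes every frame stands for 4 seconds of airtime and that all cores were busy for the whole run:

```bash
# Hypothetical helper: back-of-the-envelope figures for a scan of FRAMES frames
# (sampled every 4 seconds) that took SECONDS of wall-clock time on CORES cores.
scan_stats() {
  awk -v frames="$1" -v secs="$2" -v cores="$3" 'BEGIN {
    printf "airtime scanned: %.1f hours\n", frames * 4 / 3600
    printf "frames per second: %.1f\n", frames / secs
    printf "core-seconds per frame: %.2f\n", cores * secs / frames
  }'
}

# Figures reported above: 927,588 frames, 10 hours 40 minutes, 60 cores.
scan_stats 927588 $(( 10 * 3600 + 40 * 60 )) 60
```

For this run the helper reports about 1,030.7 hours of airtime, roughly 24 frames per second overall, and about 2.5 core-seconds of facedetect time per frame.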


In all, 319 of the 927,588 examined frames contained a match for Tucker Carlson. Since each frame represents 4 seconds of airtime, this works out to as much as 21 minutes of airtime, around 0.03% of the total airtime across these four Russian channels over a week and a half! In reality, because broadcasts are sampled every 4 seconds, a matching frame does not necessarily mean 4 full seconds of Tucker Carlson (he might have been on screen for only 1-3 seconds of that 4-second interval), so this is only a rough estimate of his screen time. Any false positives would reduce the total further. Together, the matching frames form 75 distinct clips across 55 broadcasts.
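The arithmetic behind these figures is simple enough to check in the shell. Below is a minimal sketch with a hypothetical helper name. It assumes every matching frame stands for a full 4 seconds, so it shares the caveats above:

```bash
# Hypothetical helper: rough screen-time estimate from the number of matching
# frames and the total number of frames scanned, at 4 seconds per frame.
screen_time() {
  awk -v matched="$1" -v total="$2" 'BEGIN {
    printf "%.1f minutes (%.3f%% of frames)\n", matched * 4 / 60, 100 * matched / total
  }'
}

screen_time 319 927588   # prints 21.3 minutes (0.034% of frames)
# Or, straight from the match list:
# screen_time "$(wc -l < MATCHING.TXT)" 927588
```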

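To collapse this per-frame list into the per-clip list at the bottom of this post, we used the following Perl script. It treats matching frames from the same broadcast that are no more than 4 frames (16 seconds) apart as a single clip: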

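```perl
#!/usr/bin/perl
# Collapse the sorted per-frame match list (e.g. MATCHING.TXT) into one HTML
# list item per clip. Matching frames from the same broadcast that are no more
# than 4 frames (16 seconds) apart are treated as a single clip.
use strict;
use warnings;
use Time::Local qw(timegm);

my ($LASTID, $LASTFRAME) = ('', 0);
my %UNIQ_IDS;

open(my $fh, '<', $ARGV[0]) or die "Cannot open $ARGV[0]: $!\n";
while (my $line = <$fh>) {
    # Extract the broadcast ID and frame number from paths ending in <ID>-<FRAME>.jpg
    my ($ID, $FRAME) = $line =~ /.*\/(.*?)-(\d+)\.jpg/ or next;

    # Start a new clip when the broadcast changes or the gap exceeds 4 frames.
    if ($ID ne $LASTID || ($FRAME - $LASTFRAME) > 4) {
        my ($CHAN) = $ID =~ /^(.*?)_\d{4}/;
        my ($year, $mon, $day, $hour, $min, $sec) =
            $ID =~ /(\d{4})(\d\d)(\d\d)_(\d\d)(\d\d)(\d\d)/;

        # The ID encodes the broadcast's UTC start time and frames are 4 seconds apart;
        # timegm() interprets the fields as UTC regardless of the local timezone.
        my $timestamp = timegm($sec, $min, $hour, $day, $mon - 1, $year) + ($FRAME - 1) * 4;

        # Format the clip's start time in UTC (gmtime rather than localtime).
        my ($s, $m, $h, $mday, $mo, $yr) = gmtime($timestamp);
        my $when = sprintf("%d/%d/%d %d:%02d:%02d", $mo + 1, $mday, $yr + 1900, $h, $m, $s);

        print "<li><a href=\"https://api.gdeltproject.org/api/v2/tvv/tvv?id=$ID&play=$timestamp\">$CHAN: $when UTC (Frame: $FRAME)</a></li>\n";
    }
    $LASTID    = $ID;
    $LASTFRAME = $FRAME;
    $UNIQ_IDS{$ID}++;
}
close($fh);

my $cnt = scalar(keys %UNIQ_IDS);
print "Found $cnt IDs...\n";
```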
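For readers who prefer to stay in the shell, the grouping rule of the Perl script above can be sketched in awk. This is a hypothetical helper, not the script used for this post. Instead of HTML links, it prints each clip's broadcast ID, first frame and number of matching frames. It assumes its input is sorted and consists of paths ending in `<ID>-<FRAME>.jpg`:

```bash
# Hypothetical helper: group a sorted list of matching frame paths into clips,
# mirroring the Perl script's rule: a new clip starts when the broadcast ID
# changes or the gap since the previous matching frame exceeds 4 frames.
# Prints one line per clip: <broadcast ID> <first frame> <matching frames>.
collapse_clips() {
  awk '
    {
      name = $0
      sub(/^.*\//, "", name)                  # keep only the file name
      if (!sub(/\.jpg$/, "", name)) next      # skip anything that is not a .jpg
      if (!match(name, /-[0-9]+$/)) next      # expect <ID>-<FRAME>
      id = substr(name, 1, RSTART - 1)
      frame = substr(name, RSTART + 1)
      if (id != lastid || frame - lastframe > 4) {
        if (clips > 0) print clip_id, clip_start, clip_frames
        clips++
        clip_id = id; clip_start = frame; clip_frames = 0
      }
      clip_frames++
      lastid = id; lastframe = frame
    }
    END { if (clips > 0) print clip_id, clip_start, clip_frames }
  ' "$@"
}

# Usage: collapse_clips MATCHING.TXT
```

To count the distinct broadcasts, pipe the helper's output through `cut -d' ' -f1 | sort -u | wc -l`.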

It is important to note that the facedetect tool only performs basic image similarity comparison, not the full-fledged facial landmark comparison that a true facial recognition system would perform. As a result, it will generally only detect cases where Tucker Carlson is facing directly toward the camera in a studio environment, as he does for the majority of his show's airtime. A more robust analysis would likely use more sophisticated tools to inventory his appearances more precisely. Such tools could capture instances where his head is tilted or occluded, or where he appears outside a studio environment, such as leading a rally or being interviewed on other shows. The simplicity of the matching algorithm also means there may be false positive matches.

In the end, we've demonstrated that by combining the Visual Explorer preview images with the off-the-shelf "facedetect" tool, we can scan a week and a half of Russian television across 4 channels, totaling 1,030 hours of airtime, on a single 60-core CPU-only VM in just under 11 hours! Simply by scheduling this workflow as a cronjob that uses the last 72 hours as its DATES list, we could repeat this pipeline every 30 minutes to scan Russian television in near-realtime, provided each run only processes broadcasts that have not already been scanned!
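Below is a minimal sketch of the cron variant's date handling, using a hypothetical helper name and assuming GNU date. It regenerates the DATES file on each run to cover the last 72 hours. A 72-hour window can touch four calendar days, so the helper emits the dates from 3 days ago through today. It assumes the inventory files are organized by UTC date:

```bash
# Hypothetical helper: print YYYYMMDD dates (UTC) from N days ago through today,
# one per line, suitable for writing to the DATES file. Requires GNU date.
last_n_days() {
  local n="$1" i
  for (( i = n; i >= 0; i-- )); do
    date -u -d "$i days ago" +%Y%m%d
  done
}

last_n_days 3   # e.g. redirect to DATES: last_n_days 3 > DATES
```

A crontab entry such as `*/30 * * * * /path/to/scan.sh` would then run the pipeline every 30 minutes. The script path there is hypothetical.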

You can see the complete list of clips below:

- 1TV: 10/4/2022 7:55:56 UTC (Frame: 002415)

- 1TV: 10/4/2022 10:40:4 UTC (Frame: 002177)

- 1TV: 10/4/2022 13:50:16 UTC (Frame: 002255)

- 1TV: 10/5/2022 2:15:32 UTC (Frame: 000234)

- 1TV: 10/5/2022 4:41:56 UTC (Frame: 000855)

- 1TV: 10/5/2022 5:30:56 UTC (Frame: 000240)

- 1TV: 10/5/2022 5:31:20 UTC (Frame: 000246)

- 1TV: 10/5/2022 6:8:52 UTC (Frame: 000809)

- 1TV: 10/5/2022 14:57:48 UTC (Frame: 000193)

- 1TV: 10/6/2022 21:19:0 UTC (Frame: 001111)

- 1TV: 10/6/2022 21:19:32 UTC (Frame: 001119)

- 1TV: 10/6/2022 10:33:32 UTC (Frame: 002079)

- 1TV: 10/6/2022 14:22:0 UTC (Frame: 000331)

- 1TV: 10/6/2022 14:22:44 UTC (Frame: 000342)

- 1TV: 10/7/2022 5:25:24 UTC (Frame: 000157)

- 1TV: 10/7/2022 5:26:4 UTC (Frame: 000167)

- 1TV: 10/7/2022 11:13:20 UTC (Frame: 000201)

- 1TV: 10/7/2022 14:15:40 UTC (Frame: 000236)

- 1TV: 10/7/2022 21:24:20 UTC (Frame: 001716)

- 1TV: 10/9/2022 14:30:0 UTC (Frame: 000451)

- NTV: 10/2/2022 13:2:12 UTC (Frame: 000034)

- NTV: 10/5/2022 3:3:40 UTC (Frame: 000056)

- NTV: 10/5/2022 3:29:36 UTC (Frame: 000445)

- NTV: 10/6/2022 7:1:0 UTC (Frame: 000016)

- NTV: 10/7/2022 1:6:16 UTC (Frame: 000095)

- NTV: 10/8/2022 3:1:52 UTC (Frame: 000029)

- NTV: 10/9/2022 12:27:0 UTC (Frame: 000406)

- NTV: 10/11/2022 3:22:40 UTC (Frame: 000341)

- NTV: 10/11/2022 6:12:12 UTC (Frame: 000184)

- NTV: 10/11/2022 9:16:16 UTC (Frame: 000245)

- RUSSIA1: 10/2/2022 15:13:4 UTC (Frame: 000197)

- RUSSIA1: 10/3/2022 17:48:4 UTC (Frame: 001922)

- RUSSIA1: 10/4/2022 4:50:12 UTC (Frame: 000304)

- RUSSIA1: 10/4/2022 6:16:0 UTC (Frame: 001591)

- RUSSIA1: 10/4/2022 6:16:32 UTC (Frame: 001599)

- RUSSIA1: 10/4/2022 7:57:40 UTC (Frame: 000041)

- RUSSIA1: 10/4/2022 8:26:56 UTC (Frame: 000480)

- RUSSIA1: 10/4/2022 10:57:0 UTC (Frame: 000406)

- RUSSIA1: 10/4/2022 12:11:32 UTC (Frame: 001524)

- RUSSIA1: 10/4/2022 14:6:44 UTC (Frame: 000027)

- RUSSIA1: 10/4/2022 15:40:52 UTC (Frame: 000314)

- RUSSIA1: 10/4/2022 17:0:28 UTC (Frame: 001508)

- RUSSIA1: 10/5/2022 6:20:56 UTC (Frame: 001665)

- RUSSIA1: 10/5/2022 11:45:16 UTC (Frame: 001130)

- RUSSIA1: 10/5/2022 12:14:20 UTC (Frame: 001566)

- RUSSIA1: 10/5/2022 12:17:52 UTC (Frame: 001619)

- RUSSIA1: 10/5/2022 16:49:40 UTC (Frame: 001346)

- RUSSIA1: 10/5/2022 16:54:12 UTC (Frame: 001414)

- RUSSIA1: 10/6/2022 4:43:52 UTC (Frame: 000209)

- RUSSIA1: 10/6/2022 14:7:44 UTC (Frame: 000042)

- RUSSIA1: 10/6/2022 15:12:0 UTC (Frame: 000781)

- RUSSIA1: 10/7/2022 4:34:24 UTC (Frame: 000517)

- RUSSIA1: 10/9/2022 14:17:52 UTC (Frame: 001169)

- RUSSIA1: 10/9/2022 14:21:48 UTC (Frame: 001228)

- RUSSIA1: 10/10/2022 16:31:36 UTC (Frame: 000100)

- RUSSIA1: 10/11/2022 10:57:0 UTC (Frame: 000406)

- RUSSIA24: 10/2/2022 18:16:44 UTC (Frame: 000252)

- RUSSIA24: 10/4/2022 10:47:28 UTC (Frame: 001118)

- RUSSIA24: 10/4/2022 12:44:4 UTC (Frame: 000662)

- RUSSIA24: 10/4/2022 14:16:48 UTC (Frame: 001123)

- RUSSIA24: 10/4/2022 16:25:56 UTC (Frame: 000390)

- RUSSIA24: 10/4/2022 19:2:0 UTC (Frame: 000286)

- RUSSIA24: 10/4/2022 19:9:4 UTC (Frame: 000392)

- RUSSIA24: 10/4/2022 19:9:4 UTC (Frame: 000002)

- RUSSIA24: 10/4/2022 20:27:16 UTC (Frame: 001175)

- RUSSIA24: 10/5/2022 22:20:56 UTC (Frame: 000315)

- RUSSIA24: 10/5/2022 19:15:16 UTC (Frame: 000095)

- RUSSIA24: 10/5/2022 20:14:36 UTC (Frame: 000985)

- RUSSIA24: 10/5/2022 20:21:8 UTC (Frame: 001083)

- RUSSIA24: 10/6/2022 12:33:44 UTC (Frame: 000507)

- RUSSIA24: 10/6/2022 13:39:16 UTC (Frame: 000560)

- RUSSIA24: 10/6/2022 15:39:24 UTC (Frame: 000067)

- RUSSIA24: 10/6/2022 18:14:32 UTC (Frame: 001119)

- RUSSIA24: 10/9/2022 17:19:56 UTC (Frame: 001200)

Of those 75 clips, 17 ended up being false positives:

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA24_20221005_230900_Vecher_s_Vladimirom_Solovevim&play=1665015668

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA24_20221005_230900_Vecher_s_Vladimirom_Solovevim&play=1665015276

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA24_20221005_230900_Vecher_s_Vladimirom_Solovevim&play=1665011716

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA24_20221004_224300_Spetsialnii_reportazh&play=1664924520

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221003_194000_Voskresnii_vecher_s_Vladimirom_Solovevim&play=1664833684

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221005_192000_Vecher_s_Vladimirom_Solovevim&play=1665002980

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221005_192000_Vecher_s_Vladimirom_Solovevim&play=1665003252

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221006_083000_60_minut&play=1665045832

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221006_182000_Chaiki&play=1665083520

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221007_080000_Vesti&play=1665131664

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221009_170000_Vesti_nedeli&play=1665339708

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221010_202500_Vecher_s_Vladimirom_Solovevim&play=1665433896

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=RUSSIA1_20221011_143000_60_minut&play=1665500220

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=NTV_20221008_070000_Segodnya&play=1665212512

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=NTV_20221007_050000_Segodnya&play=1665119176

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=NTV_20221005_070000_Segodnya&play=1664954976

- https://api.gdeltproject.org/api/v2/tvv/tvv?id=NTV_20221005_070000_Segodnya&play=1664953420
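Put another way, 58 of the 75 flagged clips were genuine appearances. Below is a trivial awk helper (the name is hypothetical) that makes the clip-level precision implied by those two counts explicit:

```bash
# Hypothetical helper: number of true matches and the share of flagged clips
# that were real, given the flagged clip count and the false positive count.
clip_precision() {
  awk -v flagged="$1" -v false_pos="$2" 'BEGIN {
    printf "%d true matches, precision %.1f%%\n", flagged - false_pos, 100 * (flagged - false_pos) / flagged
  }'
}

clip_precision 75 17   # prints 58 true matches, precision 77.3%
```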