Following last night's arson attack on the Saudi Arabian embassy in Iran, we wanted to see how well the Google Cloud Vision API could filter the imagery emerging from coverage of the situation to find only images featuring fire or fire damage. The results below show how powerful it is to couple the textual GKG, with its mass machine translation, multilingual geocoding, and full-text analysis, with the deep learning image recognition of the Google Cloud Vision API.

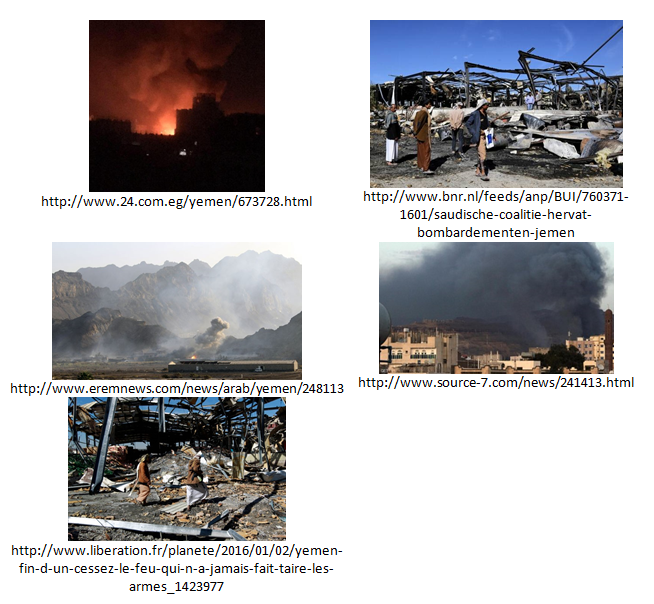

With a single line of SQL in Google BigQuery (see the bottom of this post), we were able to ask for all images of fire or fire damage found in articles mentioning a location anywhere in Saudi Arabia or Iran, and compile the list of images below in just a few seconds. It turns out that fire imagery was returned for two different topics: the protests and Saudi Arabia's bombing runs on Yemen. We have separated the two below by placing imagery from articles that also mentioned Yemen in its own group. Keep in mind that we are currently processing only a minute fraction of global news imagery as we slowly scale up this prototype experiment, but as these early results attest, the outcome is nothing short of extraordinary.

SAUDI ARABIA PROTESTS

YEMEN BOMBINGS

TECHNICAL DETAILS

Since both the VGKG and GKG are housed in Google BigQuery, the images above were found through the simple query:

    SELECT DocumentIdentifier, ImageURL, Labels, GeoLandmarks
    FROM [gdelt-bq:gdeltv2.cloudvision]
    WHERE Labels LIKE '%fire<%'
      AND DocumentIdentifier IN (
        SELECT DocumentIdentifier
        FROM [gdelt-bq:gdeltv2.gkg]
        WHERE DATE > 20160100000000
          AND (V2Locations LIKE "%Saudi Arabia%" OR V2Locations LIKE "%Iran%"))
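
For readers who want to adapt the query to other labels or countries, here is a minimal Python sketch of how its pieces fit together. It only builds the query text and mimics the two string filters locally; it does not call BigQuery. The helper names (`build_label_query`, `has_label`, `split_by_mention`) are hypothetical, the sample strings in the usage note are invented, and `split_by_mention` assumes each result row also carries the article's `V2Locations` text, which the query above does not select as written.

```python
def build_label_query(countries, label="fire", min_date=20160100000000):
    """Rebuild the query from this post for a given label and country list."""
    # One LIKE clause per country, OR-ed together as in the original query.
    location_filter = " OR ".join(
        f'V2Locations LIKE "%{country}%"' for country in countries
    )
    return (
        "SELECT DocumentIdentifier, ImageURL, Labels, GeoLandmarks "
        "FROM [gdelt-bq:gdeltv2.cloudvision] "
        f"WHERE Labels LIKE '%{label}<%' "
        "AND DocumentIdentifier IN ("
        "SELECT DocumentIdentifier FROM [gdelt-bq:gdeltv2.gkg] "
        f"WHERE DATE > {min_date} AND ({location_filter}))"
    )


def has_label(labels_field, label="fire"):
    """Local equivalent of Labels LIKE '%fire<%': the label text followed by '<'."""
    return f"{label}<" in labels_field


def split_by_mention(rows, place="Yemen"):
    """Split rows into (mentions place, does not mention place).

    Assumption: each row is a dict with a 'V2Locations' string.
    """
    mentioned = [row for row in rows if place in row["V2Locations"]]
    other = [row for row in rows if place not in row["V2Locations"]]
    return mentioned, other
```

Calling `build_label_query(["Saudi Arabia", "Iran"])` reproduces the filters used above. Note what the pattern does and does not match: `has_label("fire<0.9")` and `has_label("wildfire<0.8")` are both true, because the pattern accepts any label ending in "fire", while `has_label("firefighter<0.7")` is false, because the trailing `<` requires the label name to end right after "fire".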