Continuing our story segmentation experiments, we used Gemini 2.5 Flash Thinking to "watch" 16 years of three English language 24/7 cable news channels by reading their closed captioning and asked it to compile an annotated catalog index of every single story it found in all that news coverage. In total, Gemini examined 404,443 broadcasts totaling nearly half a million hours covering the world's biggest stories of the past 16 years.

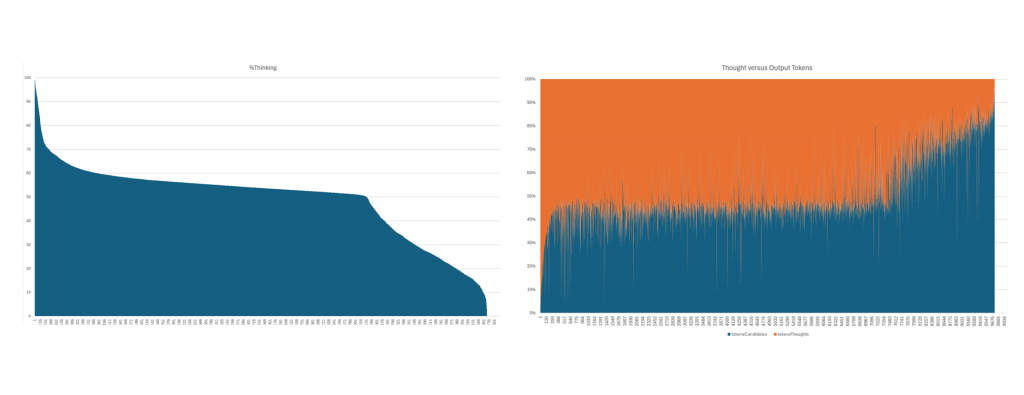

In all, those 3 channels across the 16 years contained 2.3B spoken words (32.8B characters) yielding 11.99B input tokens (around 2.73 input characters per input token), which led to 4B output tokens supported by 4.7B thinking tokens. In all, Gemini 2.5 Flash Thinking cataloged 3.1M distinct stories across 10.1M clips, totaling 61.5M topics, 31.5M entities (an entity every 101 words), 30.2M emotions and 29.2M locations (a location mention every 109 words).

We used the standard off-the-shelf public Gemini 2.5 Flash Thinking model without any modifications. No data was used to train, tune or otherwise contribute to any model: we used Gemini only to create an index of each broadcast.

Incredibly, asking Gemini 2.5 Flash to "watch" half a million hours of television news through 2.3B words of closed captioning and to catalog and index every story described within cost just $12,783. You read that correctly: cataloging more than 3.1 million stories from half a million hours of television news coverage cost just $12,783, demonstrating the immense potential of advanced models to assist with cataloging and indexing vast collections.